Some of you may be confused why someone other than Brett is writing the Part 2 for this series (click here for Part 1). There are two reasons for this:

- I couldn’t let Brett have all the fun

- I’m way more fun than Brett anyway, so you’re welcome!

All kidding aside, I already had a few posts in the hopper, so we decided to team up to create this series. It’s the Brett and Megan show, and you’re all invited! Buckle up and enjoy Part 2.

Overview

Recently, I performed security testing for a web application that allowed people to collaboratively fill out paperwork. During this test, I came across an interesting Stored Cross-Site Scripting (Stored XSS) vulnerability that highlights the importance of sanitizing user input and of performing proper input validation. Although improper input validation is a common and not-so-sexy vulnerability, it can often be used to build other attacks. In this blog post, I will share an example of what can happen when input validation is done poorly.

Discovery

During my walk-through of the web application, I noticed users could submit one or more email addresses for people that they would like to have collaborate with them on their paperwork. The web application then generated invitation emails that were sent to the contact list specified by the applicant. I was interested in whether or not it would be possible to use this interaction to do something malicious.

Using Burp Suite, I inspected the HTTP POST request used to invite someone to collaborate. I decided to try adding some simple script tags to the “lastNm” field, just to see what would happen. The request and response are shown below. Please note that, for the sake of this blog post, I will refer to the client’s domain as example[.]org.

POST request used to add a reference:

POST /addCollaborator.php HTTP/1.1
Host: example[.]org
Accept: text/html
Accept-Encoding: gzip, deflate
Content-Type: application/x-www-form-urlencoded
Content-Length: 286
Origin: https://foo[.]net
Connection: close
Cookie: PHPSESSID=[redacted]

firstNm=Michael&lastNm=Smith%3Cscript%3Ealert%281%29%3C%2Fscript%3E&email=[redacted]

Response showing script tags:

HTTP/1.1 200 OK
Date: Sun, 26 Jan 2020 20:17:50 GMT
Server: Apache
Strict-Transport-Security: max-age = 31536000; includeSubDomains
Expires: Thu, 19 Nov 1981 08:52:00 GMT
Cache-Control: no-store, no-cache, must-revalidate
Pragma: no-cache
X-Frame-Options: SAMEORIGIN
Vary: Accept-Encoding
Content-Secure-Policy: default-src 'self';
X-Content-Type-Options: nosniff
Content-Length: 23955
Connection: close
Content-Type: text/html; charset=UTF-8

<!DOCTYPE html>
<html lang="en">
[truncated]
<span class="reference-name">Michael Smith&lt;script&gt;alert(1)&lt;/script&gt;</span>

[truncated]

You will notice that, on the sender’s web page, the script tags are properly HTML-encoded, so any HTML sent to the application won’t render as code in the browser. Somewhat disappointed (I had hoped for an easy win with a Reflected Cross-Site Scripting attack), I continued on with my testing.
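For readers following along, the URL-encoded “lastNm” value in the POST body can be decoded to confirm exactly what was submitted. This is a minimal Python sketch using only the standard library; the redacted email field is left out, and the variable names are my own for illustration.

```python
from urllib.parse import parse_qs

# Form body from the POST request above, minus the redacted email field
body = "firstNm=Michael&lastNm=Smith%3Cscript%3Ealert%281%29%3C%2Fscript%3E"

# parse_qs percent-decodes each value and returns a dict of lists
fields = parse_qs(body)

print(fields["firstNm"][0])  # Michael
print(fields["lastNm"][0])   # Smith<script>alert(1)</script>
```

The decoded value is what the application received and stored; how each page encodes it on output is what decides whether the browser treats it as text or as code.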

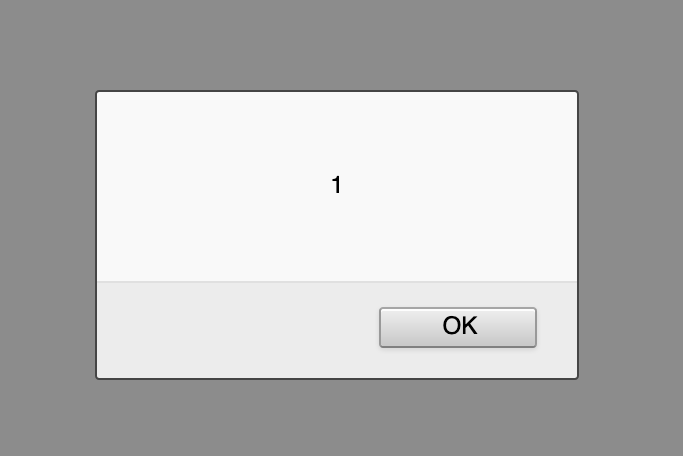

After opening the link that was emailed to the collaborator, I clicked on a link and logged into the referral portal. After logging in, I was greeted with an alert popup.

Hello old friend!!! The <script>alert(1)</script> from my POST request had popped in the collaborator’s web page.

After a quick happy-hacker-dance, I took a look at the page’s source code, and quickly identified the problem. Toward the top of the page, there was a <div> that my script tags had been inserted into, without HTML-encoding.

[truncated]

<div class="hdr-applicant-name">Welcome, Michael Smith<script>alert(1)</script></div>

[truncated]

An easy mistake to make, but one that could have big consequences. Stored Cross-Site Scripting (XSS) attacks can be nasty. Consequences can include session hijacking and information exposure, among other things.

In this case, it was clear to me what had gone wrong. The developers had been aware that they should sanitize user input, but they had not implemented the same checks in all locations within their site. Rather than sanitizing data on its way into the database, they had chosen to sanitize on the way out. Either solution can work, but the latter takes greater diligence.

By choosing to sanitize the data on its way out of the database (rather than on its way in), one must carefully ensure that all locations rendering the data do so in a secure way. That can be a tricky task! One slip-up in a browser, and those tags will pop.
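To make the “sanitize on the way out” approach concrete, here is a minimal Python sketch of encoding a stored name at render time. The client’s application was not written in Python, and the function name `render_welcome_header` is hypothetical; the point is only that every place that renders the stored value needs an equivalent encoding step.

```python
import html


def render_welcome_header(first_name: str, last_name: str) -> str:
    """Build the welcome <div>, HTML-encoding the stored values at render time."""
    full_name = f"{first_name} {last_name}"
    # html.escape turns &, <, > and quote characters into HTML entities,
    # so the browser displays the value as text instead of parsing it as markup
    safe_name = html.escape(full_name)
    return f'<div class="hdr-applicant-name">Welcome, {safe_name}</div>'


print(render_welcome_header("Michael", "Smith<script>alert(1)</script>"))
# <div class="hdr-applicant-name">Welcome, Michael Smith&lt;script&gt;alert(1)&lt;/script&gt;</div>
```

In practice, a templating engine with auto-escaping enabled by default is usually a safer choice than calling an escape function by hand in each view, since it removes the chance of forgetting one location.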

Remediation

For this application, and others, the best way to handle input validation is often before writing to the database. Where possible, applications should limit the characters or data types permitted for user input to the bare minimum. Do many people really need to use script tags in their last name? Probably not. If the system had rules in place limiting the ‘lastNm’ field to alphanumeric, hyphen, and apostrophe characters, for example, the script tags that I had inserted into the ‘lastNm’ field would have been rejected outright.
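As a rough illustration of the allow-list rule described above, the following Python sketch accepts only alphanumeric, hyphen, and apostrophe characters. The function name, the pattern, and the 64-character limit are assumptions for this example, not the client’s actual implementation.

```python
import re

# Hypothetical allow-list: ASCII letters, digits, hyphens, and apostrophes,
# between 1 and 64 characters (the length limit is an assumption)
LAST_NAME_PATTERN = re.compile(r"[A-Za-z0-9'-]{1,64}")


def is_valid_last_name(value: str) -> bool:
    """Return True only if the entire value matches the allow-list."""
    return LAST_NAME_PATTERN.fullmatch(value) is not None


print(is_valid_last_name("O'Brien-Smith"))                   # True
print(is_valid_last_name("Smith<script>alert(1)</script>"))  # False
```

A real application would likely need a broader rule (spaces and non-ASCII letters appear in plenty of legitimate names), but the principle is the same: define what is allowed and reject everything else before it reaches the database.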

For some user input fields, limiting characters may not be an option. In those instances, properly encoding the characters into a safe format can make sense. Perhaps all characters that could be interpreted as code by a browser should be HTML-encoded before entering the database, for example. The specific implementation of input validation will vary from application to application, but it is extremely important that it is done well. A great resource to reference for more information is the OWASP Input Validation Cheat Sheet. It highlights a number of strategies that can be used to perform input validation.

Although input validation issues are neither new nor novel, they are rampant in the wild. By taking precautions when accepting and using input from users, a great number of serious (and way cooler) vulnerabilities can be prevented. That makes input validation an extremely important part of developing secure applications.